Imagine creating a work of art in seconds with artificial intelligence and a single phrase.

And just like that...

Technology like this has some artists excited for new possibilities and others concerned for their future.

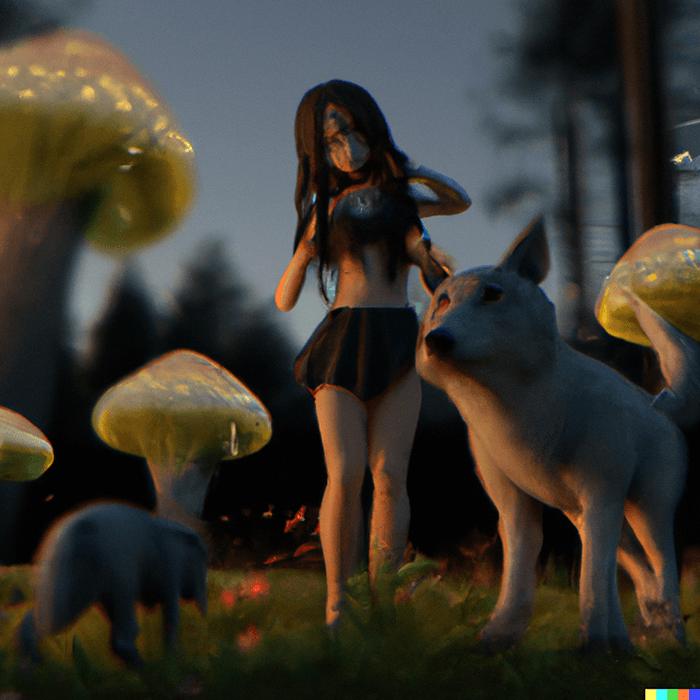

This is one way artificial intelligence can output a selection of images based on the words and phrases it is fed. The program draws on the dataset it learned from, typically pulled from the internet, to generate possible images.

For some, AI-generated art is revolutionary.

In June 2022, Cosmopolitan released its first magazine cover generated by an AI program named DALL-E 2. However, the AI did not work on its own. Video director Karen X. Cheng, the artist behind the design, documented on TikTok what specific words she used for the program to create the image of an astronaut triumphantly walking on Mars:

“A wide angle shot from below of a female astronaut with an athletic feminine body walking with swagger towards camera on Mars in an infinite universe, synthwave digital art.”

KAREN X. CHENG

Video Director

(Courtesy of Karen X. Cheng)

While the cover boasts that “it only took 20 seconds to make” the image, that’s only partially true. “Every time you search, it takes 20 seconds,” Cheng says. “If you do hundreds of searches, then, of course, it’s going to take quite a bit longer. For me, I was trying to get, like, a very specific image with a very specific vibe.”

Images generated by DALL•E 2 using the phrases Karen X. Cheng developed to get to the final Cosmopolitan cover. (Cover courtesy of Karen X. Cheng).

As one of the few people with access to the AI system, Cheng told the Los Angeles Times that her first few hours with the program were “mind-blowing.”

“I felt like I was witnessing magic,” she says. “Now that it’s been a few months, I’m like, ‘Well, yes, of course, AI generates anything.’”

On July 20, OpenAI announced that DALL-E would go into a public beta phase, allowing a million people from its waitlist to access the technology.

DALL-E 2 has altered Cheng’s perspective as an artist. “I am now able to create different kinds of art that I never was before,” she says.

These programs have also drawn their fair share of critics. Illustrator James Gurney shared on his blog in April 2022 that while the AI technology is revolutionary, it’s causing fear among artists who worry the technology will ultimately devalue their livelihoods. “The power of these tools has blown me away,” he tells The Times over email. “They can make endless variations, served up immediately at the push of a button, all made without a brain or a heart.”

JAMES GURNEY

Illustrator

(Photo by Robert Eckes)

Gurney believes AI is changing how consumers engage with and interpret art altogether. “There’s such a firehose of pictures and videos, but to me, they’re starting to look the same: same cluelessness about human interaction, same type of ornamentation, same infinitely morphing videos,” he says. If the output by an AI system is too real, it can, in turn, alter what we see as reality.

Example of distortions in DALL•E 2 generations in intro prompt. (Photos courtesy of DALL•E 2).

While AI has opened artists to new possibilities, generating images within seconds that bring their words to life, AI-generated art has also blurred the lines of ownership and heightened instances of bias. AI has artists divided on whether to embrace technological advances or take a step back. No matter where one stands, it’s impossible to avoid the reality that AI systems are here.

With a Sharpie in one hand and a white, Converse high-top in the other, the Los Angeles-based XR Creator Don Allen III doodles an image onto the shoe. He coats the sneaker in a swirl of colors, with doodles of butterflies flecking one side of the shoe. They flutter through a landscape of checkered colors and freeform stripes.

DON ALLEN III

XR Creator and Metaverse advisor

(Courtesy of Don Allen III)

Allen didn’t pull each pen stroke out of thin air. He came up with the design with the help of DALL-E 2.

He first thought of combining his artistic practice with AI technology after reading “The Diamond Age” by Neal Stephenson. In the sci-fi novel, 3D printing is ubiquitous — meaning that artwork developed by 3D printing bears a lower value than handmade pieces by artists. Instead of relying completely on AI, Allen wanted to see how the technology could add value to artwork he was already making, like shoes.

Allen says that in the four months he’s been using the program, his artistic practice has expanded beyond what he could ever imagine. “The journey into AI has been a tool that expedites and streamlines every one of my creative processes so much that I lean on it more and more every day,” he says. He’s been able to generate images for shoe designs in seconds. He typically generates images with DALL-E and uses a projector to display them onto different objects. From there, he outlines and develops his pieces, adding his own style here and there as he draws.

Allen has dedicated his career to showing people how technology can advance art and creative pursuits. Before becoming a full-time creative about a year and a half ago, he worked at DreamWorks Animation for three years as a specialist trainer, teaching the company’s artists how to use creative software. As a metaverse advisor, he consults individuals and brands on the technologies that the metaverse and internet can provide to amplify their work or brand. He has created AR experiences for companies like Snapchat and artists like Lil Nas X.

Having artists find new ways to incorporate technology into their preexisting practices is what Midjourney founder David Holz had envisioned for his own image-generating AI system. Holz explains that Midjourney exists as a way of “extending the imagination powers of the human species.”

DAVID HOLZ

Founder of Midjourney

(Courtesy of David Holz)

Midjourney is similar to DALL-E in that a user can type in any phrase and the technology will generate an image based on that input. Yet Midjourney has a stronger social aspect because it lives on a Discord server, where a community of people collaborate on their creations and bounce ideas off one another.

Over time, Holz noticed how artists using Midjourney enjoyed using it to speed up the process of imagination and complement the expertise they already possess. “The general attitude we’re getting from the industry is that this lets them do a lot more breadth and exploration early in the process, which leads them to have more creative final output when there are lots of people involved at the end,” he says.

Holz compares Midjourney to the invention of the engine. It lives alongside other types of transportation, including walking, biking, and horse riding. People can still get places without an engine, but forms of transportation that utilize an engine will help them get there faster, especially to travel longer distances. Similarly, an artist may have a long way ahead of themselves when it comes to trying out ideas, and instead of spending hours trying something that may not work out how they anticipated, AI can provide a glimpse into their idea before they attempt executing it.

Ziv Epstein, a fifth-year Ph.D. student in the MIT Media Lab, has researched the implications and growth of AI-generated art. He echoes Holz in saying that these programs can never replace artists, but can instead be an aid for them. “It’s like this new generational tool which requires these existing people to basically skill up,” he says. “Getting access to this really cool and exciting new piece of technology will just bootstrap and augment their existing artistic practice.”

ZIV EPSTEIN

Fifth-year Ph.D. student

(Photo by Chenli Ye)

“Who Gets Credit for AI-Generated Art?” — a paper that Epstein co-wrote with fellow MIT colleagues Sydney Levine, David G. Rand and Iyad Rahwan — argues that AI is an extension of the imagination. Yet the authors also note that, at its core, it’s still a computer program that requires human input to create.

While DALL-E 2 generated the images for the Cosmopolitan cover, for example, Cheng still had to refine and craft the right set of phrases to get what she wanted out of it.

Cheng says that she initially felt hesitant about using AI. But as she got more comfortable with the program, it felt like a new medium. “Every kid who was born in the last five years, they’re going to grow up thinking this is just normal, just like we think it’s normal to be able to Google image search anything,” she says.

In February 2022, the U.S. Copyright Office rejected a request to grant Dr. Stephen Thaler copyright of a work created by an AI algorithm named the “Creativity Machine.” The request was reviewed by a three-person board. Titled “A Recent Entrance to Paradise,” the artwork portrayed train tracks leading through a tunnel surrounded by greenery and vibrant purple flowers.

“A Recent Entrance to Paradise” (2012) by DABUS, compliments of Dr. Stephen Thaler. (All rights reserved)

Thaler submitted his copyright request identifying the “Creativity Machine” as the author of the work. Because the request named the machine as the author, it did not fulfill the “human authorship” requirement for copyright protection.

“While the Board is not aware of a United States court that has considered whether artificial intelligence can be the author for copyright purposes, the courts have been consistent in finding that non-human expression is ineligible for copyright protection,” the board said in the copyright decision.

STEPHEN THALER

Founder of Imagination Engines

(Courtesy of Imagination Engines, Inc.)

Thaler shares that the law is “biased towards human beings” in this case.

While the request for copyright pushed for credit to be given to the Creativity Machine, the case opened up questions about the true author of AI-generated art.

Attorney Ryan Abbott, a partner at Brown, Neri, Smith & Khan LLP, helped Thaler as part of an academic project at the University of Surrey to “challenge some of the established dogma about the role of AI in innovation.” Abbott explains that copyrighting AI-generated art is difficult because of the human authorship requirement, which he finds isn’t “grounded in statute or relevant case law.” There is an assumption that only humans can be creative. “From a policy perspective, the law should be ensuring that work done by a machine is not legally treated differently than work done by a person,” he says. “This will encourage people to make, use and build machines that generate socially valuable innovation and creative works.”

From a legal standpoint, AI-generated work sits on a spectrum, with human involvement at one extreme and AI autonomy at the other.

“It depends on whether the person has done something that would traditionally qualify them to be an author or are willing to look to some nontraditional criteria for authorship,” Abbott says.

In Epstein’s article, he uses the example of the painting “Edmond de Belamy,” a work generated by a machine learning algorithm and sold at Christie’s art auction for $432,500 in October 2018. He explains that the work would not have been made without the humans behind the code. As artwork generated by AI gains commercial interest, more emphasis is put on the authors who deserve credit for the work they put into the project. “How you talk about the systems has very important implications for how we assign credit responsibility to people,” he says.

“Edmond de Belamy,” generated by machine learning in 2018

This has raised concerns among illustrators about how credit is given to AI-generated art, especially for those who feel like the programs could pull from their own online work without citing or compensating them. “A lot of professionals are worried it will take away their jobs,” illustrator Gurney says. “That’s already starting to happen. The artists it threatens most are editorial illustrators and concept artists.”

It’s common for AI to generate images in a certain style of an artist. If an artist is looking for something in the vein of Vincent van Gogh, for instance, the program will pull from his pieces to create something new in a similar style. This is where it can also get muddy. “It’s hard to prove that a given copyrighted work or works were infringed, even if an artist’s name is used in the prompt,” Gurney says. “Which images were used in the input? We don’t know.”

These are four of James Gurney’s paintings.

But he only made one of them.

The rest were generated by Midjourney with his name used in the prompt.

Legally, rights holders are concerned with providing permission or receiving compensation for having their work incorporated into another piece. Abbott says these concerns, while valid, haven’t quite caught up with the technology. “The right holders didn’t have an expectation when they were making the work that the value was going to come from training machine learning algorithms,” he says.

A 2018 study by The Pfeiffer Report sought to find out how artists were responding to advances in AI technology. After surveying more than 110 creative professionals about their attitudes toward AI, the report found that 63% of respondents were not afraid AI would threaten their jobs. The remaining 37% were either a little or extremely scared about what it might mean for their livelihoods. “AI will have an impact, but only on productivity,” Sherri Morris, chief of marketing, creative and brand strategy at Blackhawk Marketing, said in the report. “The creative vision will have to be there first.”

Illustrator and artist Jonas Jödicke worked with WOMBO Dream, another AI art-generating tool, before receiving access to DALL-E 2 in mid-July. From his experience as an illustrator using AI, he says that it could be a “big problem” if programs source his own image and make something similar in his style. He explains that programs like DALL-E pull from so many sources all over the internet that they can “create something by itself,” completely different from other work.

JONAS JÖDICKE

Illustrator

(Courtesy of Jonas Jödicke)

Jödicke acknowledges the concerns with art theft, especially as someone who has had his work stolen and used to sell products on the likes of Amazon and Alibaba. “If you upload your art to the internet, you can be certain that it’s going to be stolen at some point, especially when you have a bigger reach on social media,” he says.

Regardless, Jödicke sees AI as a new tool for artists to use. He compares it to the regressive attitudes some people have had toward digital artists who use programs like Adobe Creative Suite and Pro Tools. Sometimes artists who use these programs are accused of not being “real artists” although their work is unique and full of creativity. “You still need your artistic abilities and know-how to really polish these results and make them presentable and beautifully rendered,” he says.

For carrot cake to be carrot cake, carrots are incorporated into the batter and present in every bite. So what happens if they’re just sprinkled on top? It might be a carrot-esque dessert, but it isn’t carrot cake.

Allen views the lack of diversity in AI in the same way. In a June 28 Instagram reel, he presented the carrot cake analogy by explaining that if there are no diverse voices incorporated into the development of AI technologies, it isn’t an inclusive process. “If you want to have a really equitable and diverse artificially intelligent art system, it needs to include a diverse set of people from the beginning,” he says.

In an effort to get more voices represented in the AI conversation, he used the post to help artists from underrepresented communities get early access to DALL-E 2. Allen also highlights a larger issue in art technologies through the video: democratization.

A lot of AI art programs have closed access, meaning only a few people can use them. On July 12, Midjourney announced on Twitter that it had moved to open beta, allowing anyone to access its Discord server and use the AI technology. While DALL-E 2 still has closed access, DALL-E Mini is available for public use. (DALL-E Mini’s image quality is lower than DALL-E 2’s, though, resulting in blurry blobs for faces and objects.)

At the moment, those wanting to get into closed-access systems must join a waitlist. The reason is practical, says Epstein: Closed access allows companies developing the AI systems to tweak and develop their products, especially before opening them up for public use. That way, they can minimize potential misuse, especially when it comes to deepfakes. But some fear that AI creations could “erode our grip on a shared reality.” “Perhaps the greatest potential harm is the power to chip away at our shared confidence that we’re inhabiting the same corner of the universe because the propagandist has a faster bicycle than the fact-checker,” Gurney adds.

AI outputs can also be significantly affected by inherent bias. In May 2022, WIRED published a story in which OpenAI developers shared that one month after introducing DALL-E 2, they noticed that certain phrases and words produced biased results that perpetuated racial stereotypes. OpenAI put together a “red team” made up of outside experts to investigate possible issues that could come up if the product were made public, and the most alarming was its depictions of race and gender. The outlet reported that one red team member noted that prompts like “a man sitting in a prison cell” or “a photo of an angry man,” for instance, returned images of men of color.

Results from entering “ceo” in DALL-E

Epstein says the deeper problem lies in the datasets the AI is learning from. “There actually is this new movement to go away from these like big models where you don’t even know what’s in the model, but to actually really carefully curate your own dataset yourself because then you actually know exactly what’s going into it, how it’s ethically sourced, what are the kinds of biases that are involved in it,” he says.

Cheng says that since working with OpenAI, she’s noticed how the results of her searches have gotten more diverse as the company works on the closed beta product. For example, searches for certain occupations like “CEO” or “doctor” have returned a diverse set of people. “My hope for AI art is that it’s done thoughtfully, rolled out safely where inclusivity and diversity are highlighted and built up from the very beginning,” she says.

She adds, “We all saw what happened when social media wasn’t built thoughtfully. My hope is that that’s not repeated with AI.”

Since Cheng spoke with The Times, OpenAI announced that it had applied new techniques to DALL-E 2 after people previewing the system flagged issues with biased images.

“Based on our internal evaluation, users were 12× more likely to say that DALL·E images included people of diverse backgrounds after the technique was applied,” OpenAI wrote in the statement. “We plan to improve this technique over time as we gather more data and feedback.”

One startup based in London and Los Altos decided to lean all the way into democratization, filters or not. On Aug. 10, Stability AI announced that it would release Stable Diffusion, a system similar to DALL-E 2, to researchers and soon to the public. According to its model card, Stable Diffusion is trained on subsets of LAION-2B(en), which contains images based on English descriptors and omits content from other cultures and communities.

Bias in datasets could be avoided with diversity in tech, Allen explains. “All of the human biases are what it learned from,” he says.

He adds, “We were like, ‘let's teach you everything, including the bad stuff.’”

As more artists gain access to AI and take up the tools, art will take on a whole new look, both in how artists go about making it and in how their work develops.

Holz describes Midjourney creations as something “not made by a person, not made by a machine and we know it.” “It’s just a new thing,” he says.

He says the aesthetics of art will expand with AI and potentially lead to a “deindustrialization.” “Because these tools, at their heart, make everything look different and unique, we have the opportunity to push things back in the opposite direction,” Holz says.

But some artists fear that the heightened role of AI might do the opposite, creating a singular aesthetic and taking pieces of imagination out of the process. Gurney says it’ll be like when desktop publishing made typesetting easy and accessible, leading to a flow of similar-looking graphic designs in the 1990s. But alongside that homogeneity of design, which featured bold text and neon colors influenced by rave and cyberpunk subcultures, legacy-making art was also made, including Paula Scher’s designs for The Public Theater, which continue to influence the art form’s marketing today.

Work generated by Midjourney with the prompt “An artist crafting the best work of their life.” (Photos courtesy of Midjourney)

For those who are immersed in AI technology, it feels like there’s no turning back. “People tend to have one career for a lifetime, and I just think that the world we’re in now, we should reset expectations as a humanity of not expecting to be in the same career, in the same sort of style, for a lifetime,” Cheng says.

AI tools have already created a new wave of interest that Epstein has noticed and is currently researching. In his article co-written with Hope Schroeder and Dava Newman, “When happy accidents spark creativity: Bringing collaborative speculation to life with generative AI,” he explores how people are looking for new possibilities of imagination that step away from realism.

“There’s this idea that we’ve actually crested and have fallen back on the peak of AI art,” he says, adding that people are less interested in “photorealistic stock imagery” that you may see with DALL-E 2 and are instead looking for “beautiful, crazy new texture.”

The future of AI in the art world is unpredictable, especially since most tools remain in closed beta phases as they develop. Regardless of the stage, Epstein warns that what the public says about its early incarnations matters.

“Journalists, citizens and scientists [must] be really responsible with the way they frame AI, and not use it as a fear-mongering tactic to scare people,” he says.

Allen feels the same way. “I believe if you focus on the negative with AI, then that will come true,” he says. “And if we get more people focusing on the good and positivity that we can do with it, then that will come true.”

This story was reported by Steven Vargas. It was edited by Paula Mejía and copy edited by Evita Timmons. The design and development are by Ashley Cai. Additional development by Joy Park and Alex Tatusian. Engagement editing by David Viramontes. Additional digital help from Beto Alvarez.