From the Archives: What Nash’s ‘Beautiful Mind’ really accomplished

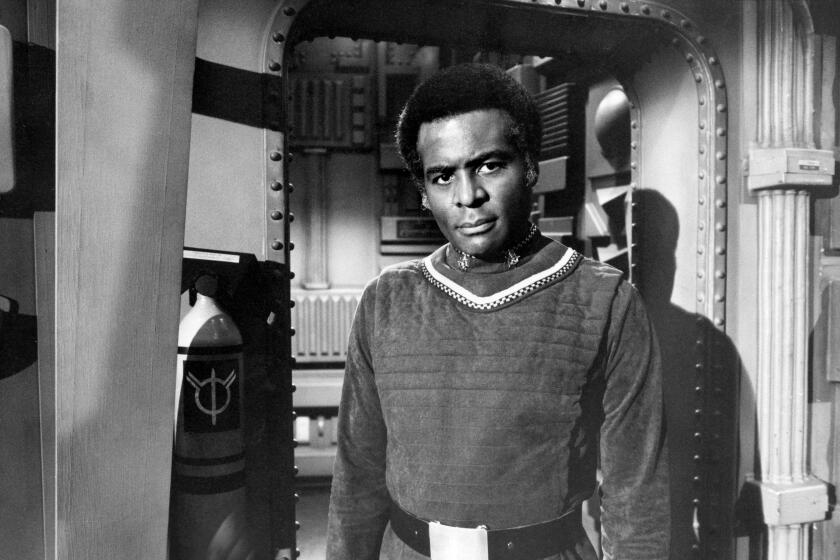

John Nash shown in 2008 attending a so-called ‘Meeting for Extraordinary Minds’ in Brescia, Italy.

When I was a Princeton undergraduate from 1995 to 1999, professor John Forbes Nash Jr., the subject of the movie “A Beautiful Mind,” was a shadow figure in a knit cap who haunted the Fine Hall math department. Nash sightings--at the Dinky train station, in the Small World coffee shop, on his slowly looping bicycle rides--were a regular pastime.

We called Nash the “Phantom of Fine Hall”--he had stopped teaching by that point--and whispered in wonder that this aging man in goggle eyeglasses and faded Jack Purcell shoes had won a Nobel Prize for economics.

“A Beautiful Mind” is bringing deserved mainstream recognition to Nash’s long struggle to overcome schizophrenia. But moviegoers will leave the film with little sense of Nash’s mathematical genius, save knowing that his contribution to modern economics can help pick up a girl at a bar.

It is axiomatic in mathematics that genius blossoms early, and so students entering Princeton’s math department, one of the world’s best, are seeking the next great insight. As rendered in “A Beautiful Mind,” when prodigy Nash arrived at Princeton, he searched for patterns in pigeons’ pecking. Nash found his stroke of genius at age 21 in a 27-page dissertation on game theory that would revolutionize modern economics and eventually win him a Nobel.

Before Nash, economists studied markets using sophisticated versions of Adam Smith’s price theory--basic supply and demand. Smith said that as buyers and sellers pursue their self-interest, the “invisible hand” of the market distributes products efficiently. But price theory can’t explain the abundant real-world examples of market inefficiency. Nash approached this problem by reformulating economics as a game.

To most people, a game is a way to while away a rainy afternoon. But to mathematicians, a game is not simply chess or poker, but any conflict situation that forces participants to develop a strategy to accomplish a goal. To mathematicians, a game is a regimented world where math is king. And a game can be a window to mathematical insight.

When I was a student, every afternoon, Princeton math professors collected in the third-floor lounge for tea and a round of backgammon. John Conway, a math professor, told us in one lecture that he stumbled on the discovery of a lifetime while studying Go, an ancient board game played with smooth stones.

Nash’s central insight, the one for which he won a Nobel Prize in 1994, was to prove that every economic game has an equilibrium point--that is, a set of strategies, one for each player, from which no player would choose to deviate on his own. If a player were to abandon his Nash equilibrium strategy while the others kept theirs, he could do no better and would usually end up worse off than before.

Nash showed that even complicated games have an equilibrium point. He proved that participants in complex economic situations tend toward a specific, secure strategy, and he provided an elegant mathematical framework for figuring out that strategy. That apparently simple insight transformed game theory from an abstract subset of mathematics to a powerful new approach to the study of economic decision-making.

Nash built on previous insights, particularly those of John von Neumann, another brilliant and brash mathematician who was a professor at the Institute for Advanced Study near Princeton when Nash was a graduate student.

(A brief note here on eccentricity and math genius--the two seem to go together. I recall my first class with Conway, a rumpled man in loose jeans who brushed aside the classroom door and flung off his sandals, which smacked against the wall. Barefoot, he turned to the blackboard and began writing equations, pausing only to survey his work with a mumble or wipe chalk handprints on his jeans. Fifty minutes later, Conway put down his chalk, put on his sandals and walked out. That was our first lecture ever as Princeton freshmen. Half the class had dropped out by the next lecture.)

Like the shoe-shucking Conway, Von Neumann was an eccentric instructor. Instead of using the whole blackboard, Von Neumann would write an equation, then immediately erase it and write another as students frantically took notes. “Proof by erasure,” his students called it.

The same year Nash was born, 1928, Von Neumann showed that in simple games like checkers or football, where one player’s gain is the other’s loss, rational players will tend toward a strategy that, even in a worst-case scenario, guarantees a minimum gain or caps a potential loss. That translates to conservative play--to a brand of football in which teams prefer the extra point to the two-point conversion and always punt on fourth and inches.
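For readers who want to see the worst-case reasoning spelled out, here is a minimal sketch in Python. The payoff table is entirely hypothetical (the play names and numbers are invented for illustration, not drawn from Von Neumann's work); it shows points gained by the offense, which are points lost by the defense. The table was chosen so that it has a saddle point, meaning the best worst-case choice can be found without randomizing between plays; in general, Von Neumann's result requires players to mix their moves.

```python
# Hypothetical zero-sum payoffs: points gained by the offense (rows),
# which are exactly the points lost by the defense (columns).
PAYOFF = {
    "conservative": {"standard": 2, "blitz": 1},
    "risky": {"standard": 4, "blitz": -3},
}

def maximin(payoff):
    """Offense: pick the play whose worst-case payoff is largest."""
    choice = max(payoff, key=lambda row: min(payoff[row].values()))
    return choice, min(payoff[choice].values())

def minimax(payoff):
    """Defense: pick the call that caps the offense's best-case payoff."""
    columns = next(iter(payoff.values())).keys()
    worst = {col: max(payoff[row][col] for row in payoff) for col in columns}
    choice = min(worst, key=worst.get)
    return choice, worst[choice]

print(maximin(PAYOFF))  # offense's guaranteed floor
print(minimax(PAYOFF))  # defense's guaranteed ceiling
```

In this made-up table the offense's floor and the defense's ceiling coincide, and the safe choice for the offense is the conservative play, which matches the punt-on-fourth-and-inches style of football described above.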

So how would this theory play out in the real world? Here’s an example taught in classes:

Two gas stations are being built in a small town made up of a single, one-mile-long Main Street. Town planners agree that the gas stations should be placed at the one-quarter and three-quarter mile marks, so that no one in town has to drive more than a quarter mile to fill up. And since residents are distributed evenly along Main Street, each station would get exactly half the business in town.

But try explaining that to the station owners. The owner who should build at the one-quarter mile mark knows people at his end of town will never go to the competing station because it’s too far away. So he’d want to build closer to the center of town to dip into his competitor’s mid-town market. Of course, the other owner is equally wily and he too edges his station closer to the center of town. Game theory tells us--and an astute business sense dictates--that the two gas stations will both end up on the same corner in the exact center of Main Street.

The equilibrium solution of the gas station game is clearly not the most efficient. While the stations still split the town’s business evenly, people on the edge of town have to drive as far as half a mile to get gas, twice the longest trip under the town planners’ solution. But neither owner would have it any other way, because moving away from the center would only hand customers to his rival. This equilibrium is an example of free market inefficiency--and a critique of Adam Smith’s invisible hand.
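The owners' edging toward the center can be simulated in a few lines of Python. This is a rough sketch under stated assumptions, not a model taken from Nash's work: Main Street is cut into 101 evenly spaced addresses (a hypothetical discretization), one customer lives at each, everyone buys from the nearer station, and ties are split. The function names are made up for this illustration.

```python
# Hypothetical discretization: Main Street is 101 evenly spaced addresses,
# numbered 0..100 (hundredths of a mile), with one customer at each.
ADDRESSES = range(101)

def share(mine, rival):
    """Customers won by the station at `mine`; ties are split evenly."""
    total = 0.0
    for x in ADDRESSES:
        d_mine, d_rival = abs(x - mine), abs(x - rival)
        if d_mine < d_rival:
            total += 1.0
        elif d_mine == d_rival:
            total += 0.5
    return total

def best_response(rival):
    """The address that wins the most customers against a fixed rival."""
    return max(ADDRESSES, key=lambda spot: share(spot, rival))

def is_equilibrium(a, b):
    """True if neither owner can gain customers by moving alone."""
    return (share(a, b) >= share(best_response(b), b)
            and share(b, a) >= share(best_response(a), a))

def settle(a, b, max_rounds=1000):
    """Let the owners take turns relocating until neither wants to move."""
    for _ in range(max_rounds):
        new_a = best_response(b)
        new_b = best_response(new_a)
        if (new_a, new_b) == (a, b):
            break
        a, b = new_a, new_b
    return a, b

def longest_drive(a, b):
    """Farthest any customer must travel to the nearer station."""
    return max(min(abs(x - a), abs(x - b)) for x in ADDRESSES)

print(settle(25, 75))                              # where the owners end up
print(longest_drive(25, 75), longest_drive(50, 50))  # planners vs. equilibrium
```

Starting from the planners' quarter-mile and three-quarter-mile marks, each owner repeatedly relocates next to his rival on the side with more customers, and the leapfrogging stops only when both sit at the midpoint. The market share per station is the same in both layouts, but the longest drive doubles.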

When Von Neumann first heard Nash’s ideas, he told Nash they were “trivial”--the math world’s harshest put-down. But over the years, even as Nash dropped math in his struggle with schizophrenia, his ideas took hold. These days, Nash-style strategic thinking can be found everywhere.

Consider America’s nuclear standoff with the Soviet Union. Each superpower could have disarmed or stockpiled a nuclear arsenal. To decide, the U.S. considered what the Soviets might do. If they armed, the U.S. needed to arm as well to defend itself.

But if the Soviet Union disarmed, the U.S. would rather arm itself anyway to achieve a strategic advantage over its enemy. The Soviet Union’s thinking ran the same way, and both countries settled on a policy of mutually assured destruction. Despite pacifists’ complaints, a nuclear standoff turned out to be the most stable and secure solution--the Cold War’s Nash equilibrium.
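The arms-race logic can be checked by brute force. The sketch below assumes a hypothetical ranking of outcomes from 4 (best) to 1 (worst) for each side; the numbers are invented to match the reasoning in the text (arming is better whatever the other side does) and are not historical estimates. It tests every pair of choices and keeps those where neither country could do better by switching alone.

```python
from itertools import product

STRATEGIES = ("disarm", "arm")

# Hypothetical ordinal payoffs (4 = best, 1 = worst), listed as
# (U.S. payoff, Soviet payoff) for each (U.S. choice, Soviet choice).
PAYOFFS = {
    ("disarm", "disarm"): (3, 3),
    ("disarm", "arm"): (1, 4),
    ("arm", "disarm"): (4, 1),
    ("arm", "arm"): (2, 2),
}

def is_nash(us, ussr):
    """True if neither side gains by changing its own choice alone."""
    us_ok = all(PAYOFFS[(alt, ussr)][0] <= PAYOFFS[(us, ussr)][0]
                for alt in STRATEGIES)
    ussr_ok = all(PAYOFFS[(us, alt)][1] <= PAYOFFS[(us, ussr)][1]
                  for alt in STRATEGIES)
    return us_ok and ussr_ok

def pure_nash_equilibria():
    """All strategy pairs that pass the no-unilateral-gain test."""
    return [pair for pair in product(STRATEGIES, repeat=2) if is_nash(*pair)]

print(pure_nash_equilibria())
```

Under these assumed rankings the only pair that survives is the one in which both sides arm, even though both would rank mutual disarmament higher. That is the same gap between the stable outcome and the efficient one seen in the gas station game.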

Professor Conway taught us that math is not the sawdust we learned in grade school, but a passionate and personal subject. In one class, he told us that while searching for patterns in Go, he discovered surreal numbers: an entirely new class of numbers able to express the infinitely small and infinitely large with unprecedented specificity. That semester, Conway taught us that the thing in math is the search--whether it is in the pecking of pigeons or the placement of Go stones.

For, as Nash and Conway both prove, you never know what you might find.

Daniel A. Grech is a former Princeton student who now works as a reporter at the Miami Herald.
