Professor creates iPhone app capable of screening for skin cancer

A new app created by a professor at the University of Houston is capable of screening patients for skin cancer within seconds, with an 85% accuracy rate.

DermoScreen, as the app is called, is still undergoing testing, but if it is eventually approved and released, it could allow patients to be quickly and inexpensively screened for melanoma.

The app works with a $500 dermoscope attachment that connects to an iPhone and magnifies what the phone’s camera can see. The user takes a photo of a suspicious mole or lesion and runs it through DermoScreen. The app then determines whether the patient might have cancer and, if so, refers the patient for a follow-up.

DermoScreen’s accuracy rate is on par with that of dermatologists and higher than that of primary care physicians, according to the University of Houston.

The app was created by George Zouridakis, a professor of engineering technology at the University of Houston, who began working on DermoScreen in 2005. The app is receiving further evaluation at the University of Texas M.D. Anderson Cancer Center.

“We are in early stages of planning and approval for this project, but such an application, if validated, has the potential for widespread use to ultimately improve patient care,” said Ana Ciurea, an assistant professor of dermatology at M.D. Anderson, in a statement.

DermoScreen is the latest example of smartphone apps that are making it easier for people to access healthcare.

In March, researchers at the Stanford University School of Medicine created EyeGo, a technology similar to DermoScreen that makes it easy for smartphone users to receive eye-care services. In that case, a trained user attaches an adapter to a smartphone, allowing it to capture high-quality images of the front and the back of a patient’s eye. The images are then shared with other health practitioners.

“Think Instagram for the eye,” said Robert Chang, an assistant professor of ophthalmology and one of the developers of the Stanford technology, in a statement.

Stanford’s smartphone eye technology would replace expensive equipment that typically costs thousands of dollars and is often unavailable in rural or developing areas.

Along with universities, established tech giants are building more healthcare capabilities into their latest devices.

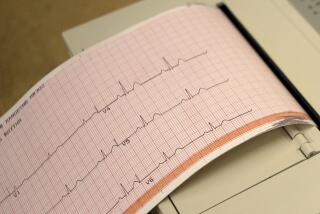

Samsung, for example, released four devices in April -- the Galaxy S5 smartphone, two smartwatches and a fitness tracking band -- that each include a pedometer and a heart rate sensor. Apple, meanwhile, has been on a hiring binge for medical experts and is reportedly developing an app that would make it easy for iPhone owners to track their health.