Four takeaways from AlphaGo’s victory over a world champion Go player

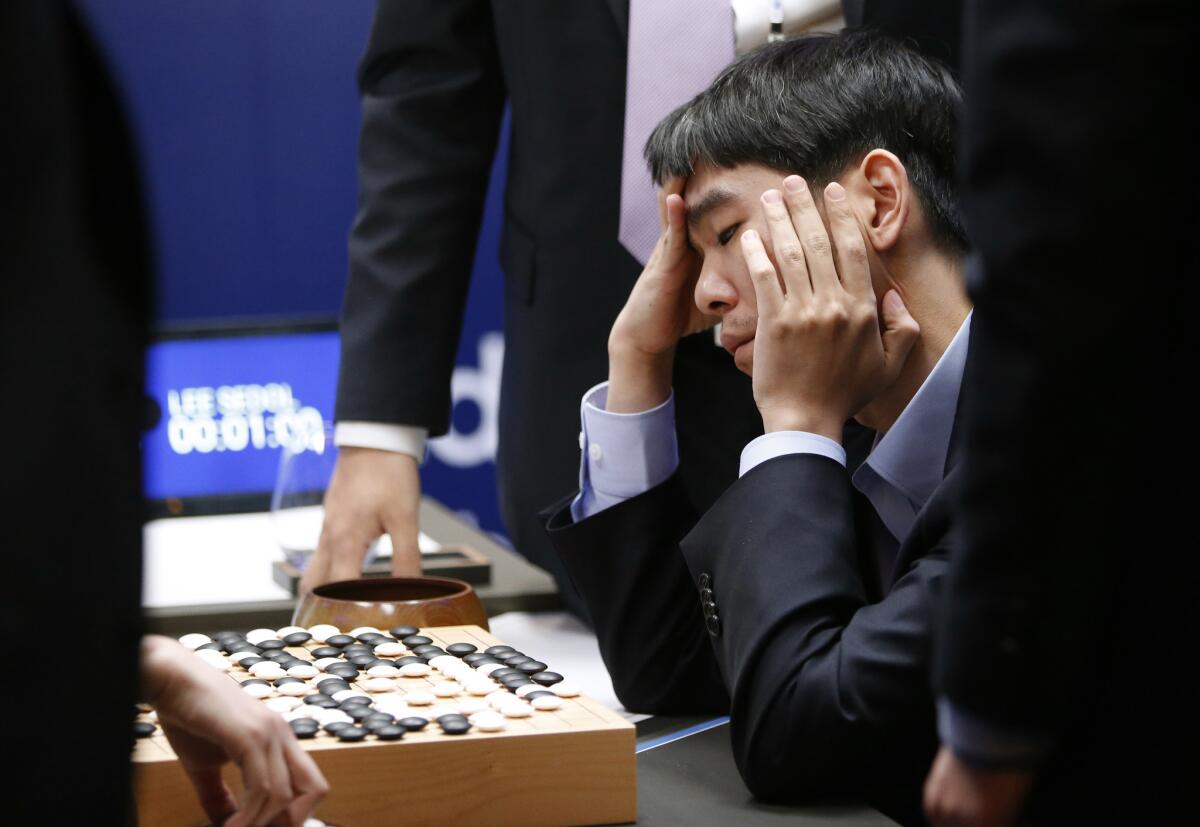

South Korean Go player Lee Sedol reviews the match after finishing the Google DeepMind Challenge Match against Google’s artificial intelligence program, AlphaGo, in Seoul, South Korea, on March 15, 2016. Google’s Go-playing computer program defeated its human opponent in a 4:1 victory.

With a decisive victory over a world champion Go player, a computer program not only mastered what may be the world's most complex board game, it also changed the scope of future AI research.

Google DeepMind's AlphaGo defeated Lee Sedol for the fourth time in a five-game match, wrapping up the much-publicized contest with a closely fought game that went into overtime on Tuesday. AlphaGo overcame what the Verge called a "bad mistake" early in the game to clinch victory.

Go, a complicated board game from ancient China, has long been seen as a benchmark for testing AI on board games. Go is especially challenging because there are many more possible moves than in chess or checkers.
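
To give a rough sense of that gap, here is a back-of-the-envelope sketch. It assumes the commonly cited estimates of about 35 legal moves per turn over about 80 turns for chess, and about 250 legal moves per turn over about 150 turns for Go. Those figures are approximations that do not come from this article, and the function name is purely illustrative.

```python
import math

# Assumed rough estimates (not from the article):
# (average legal moves per turn, typical game length in turns)
ESTIMATES = {"chess": (35, 80), "Go": (250, 150)}


def log10_game_tree_size(branching: int, depth: int) -> float:
    """Return log10 of branching**depth, a crude count of move sequences."""
    return depth * math.log10(branching)


for game, (branching, depth) in ESTIMATES.items():
    exponent = log10_game_tree_size(branching, depth)
    print(f"{game}: roughly 10^{exponent:.0f} possible move sequences")
```

Under these assumptions, the exponent for Go is close to three times the exponent for chess, which is why checking every possible line of play is out of reach.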

Go is just a game, but AlphaGo's victory is a sign of more than just board game dominance. Here's what the win means:

It's not the end of humanity

Some have reacted to the news of AlphaGo's victory with trepidation or even fear, but AI experts say the technology is still mostly developed for narrow purposes.

That means AlphaGo isn't sentient and won't be able to suddenly decide one day that it wants to learn a completely new skill.

But it's probably the end of board games

Computers have bested humans in checkers, chess and now, Go.

Board games were long viewed as the perfect test for AI because they have clear rules and nothing is hidden from players. Researchers look to see if the computer programs learn from past mistakes and determine the next best move.
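
As a toy illustration of what "nothing is hidden" makes possible, the sketch below searches every line of play in a tiny made-up game: players alternate taking one, two or three stones from a pile, and whoever takes the last stone wins. This is not how AlphaGo works, and the game and function names are assumptions chosen only to show exhaustive search in a game where both players see everything.

```python
from functools import lru_cache

ALLOWED_TAKES = (1, 2, 3)  # hypothetical rule: take 1 to 3 stones per turn


@lru_cache(maxsize=None)
def can_win(stones: int) -> bool:
    """True if the player to move can force a win with `stones` left."""
    # With no stones left, the previous player took the last one and won,
    # so any() over an empty set of moves correctly returns False.
    return any(
        not can_win(stones - take)
        for take in ALLOWED_TAKES
        if take <= stones
    )


def best_move(stones: int):
    """Return a winning number of stones to take, or None if none exists."""
    for take in ALLOWED_TAKES:
        if take <= stones and not can_win(stones - take):
            return take
    return None


print(best_move(5))  # leaves the opponent in a losing position
```

Because the whole position is visible, the program can reason about every reply its opponent might make. That is exactly what becomes impossible once cards or other information are hidden.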

Now, AI will likely move on to "incomplete information games" including certain types of poker, which will force the computer to interpret actions as signals and figure out the next best move without knowing what cards an opponent holds.

Others have suggested video games like "Starcraft" or "League of Legends," which are even more complicated. The Seattle-based Allen Institute for Artificial Intelligence is even putting its AI through standardized testing.

The lessons learned with AlphaGo can be applied to real-world jobs

AlphaGo is powered by neural network AI engines, which allow the program to learn by watching the best Go players and then train itself to get even better.

Though AlphaGo can only play Go, the fact that it can train itself to improve could be applied to other tasks, such as teaching itself to recognize faces by looking at lots of images.

AI can also help people look through large databases and perform calculations, as it does in "complete information games" like chess or Go. In "incomplete information games," it could be used in situations where there are more unknown factors, such as negotiations or cybersecurity.

It's time for researchers to think about the risks of AI

Michael Cook, an AI researcher at Goldsmiths, University of London, noted in an op-ed in the Guardian that AI inherits human biases because it is developed by humans.

"When those humans are primarily white, male, middle-class computer scientists then that causes further problems," he wrote. "Right now it’s innocuous slip-ups, like not noticing that your selfie analyser is regurgitating your data’s white, Western standards of beauty."

That means researchers risk multiplying their own flaws if they're not careful about what they use AI for and don't think more broadly about how it can be used.

For more business news, follow @smasunaga.
