Father Figures: Early Architects of the Internet and Web Look to the Future

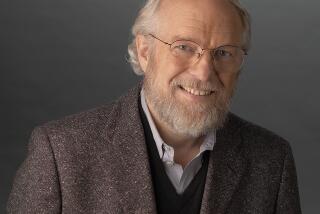

Tim Berners-Lee didn’t set out to create the World Wide Web.

While working at CERN, the European Laboratory for Particle Physics near Geneva, the British engineer wrote a program for himself called Enquire that could store and recall information based on random associations. Nine years later--in 1989--Berners-Lee proposed a plan to link information on computers around the world through a web of hypertext links. The first World Wide Web program debuted on the Internet in the summer of 1991.

The rest, as they say, is history.

With an estimated 75 million people around the world using the Web--give or take 5 or 10 million--maintaining the loosely governed computer network is a full-time job. Berners-Lee has spent the last four years serving as director of the World Wide Web Consortium at MIT’s Laboratory for Computer Science in Cambridge, Mass. The international industry consortium, also known as W3C, continues to improve the Web by developing new software protocols and maintaining open standards.

Just last week, Berners-Lee won a prestigious MacArthur Fellowship for pioneering “a revolutionary communications system requiring minimal technical understanding to locate and distribute information throughout the world at very low cost.”

But the modest 43-year-old insists that he and the Web are just getting started. In an interview with The Times, Berners-Lee offered a preview of coming attractions.

*

Question: Why is it so hard sometimes to find useful information on the Web?

Answer: There’s a whole lot of stuff out there that you’re not interested in. That’s because the Web was designed to be a mirror of the world, and there are a whole lot of things out there in the world that you’re not interested in.

*

Q: Do you see any improvements on the horizon?

A: If you create a hyperlink page, you’re suggesting to readers of one page that they should go out and read another page. Those suggestions are very powerful. It’s the links which are actually creating order on the Web.

There are some very interesting developments coming out of current research which are getting quite dramatic results. There’s a new wave of programs that instead of trying to read the Web pages--which of course is difficult for computers because they can’t understand Web pages--they are starting to use the links to realize which pages might be relevant.

It starts off by doing a keyword text-based search to find a group of sites about, say, canoes. Then it finds all of the pages that have pointers to any of those pages. Then it looks for patterns within those thousands of pages to find about 10 sites that are linked to the most. So if you ask a question like “Where can I find out about canoeing?” it will take you to the International Canoeing Assn. page, which is the place that everyone has linked to.
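The three steps described in this answer can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the research programs Berners-Lee refers to: the function name `rank_by_links`, the sample pages and the simple count of inbound links are all hypothetical stand-ins for whatever pattern analysis those programs actually perform.

```python
from collections import Counter

def rank_by_links(pages, links, keyword, top_n=10):
    """Rank pages by inbound links (illustrative only).

    pages: dict mapping a page name to its text
    links: iterable of (source, target) pairs, one per hyperlink
    """
    links = set(links)  # ignore duplicate links between the same two pages
    # Step 1: a keyword text search finds the starting group of pages.
    root = {url for url, text in pages.items() if keyword.lower() in text.lower()}
    # Step 2: add every page that points to a page in the starting group.
    expanded = root | {src for src, dst in links if dst in root}
    # Step 3: within that larger group, count how often each page is linked to.
    inbound = Counter(
        dst for src, dst in links
        if src in expanded and dst in expanded and src != dst
    )
    # Most-linked pages first; ties are broken alphabetically.
    return sorted(expanded, key=lambda url: (-inbound[url], url))[:top_n]

# Hypothetical sample data, invented for this sketch.
sample_pages = {
    "a": "canoe club news",
    "b": "canoe trips and routes",
    "c": "my favorite links",
    "d": "cooking recipes",
}
sample_links = [("a", "b"), ("c", "b"), ("c", "a"), ("d", "c")]
top_two = rank_by_links(sample_pages, sample_links, "canoe", top_n=2)
```

Page "c" never mentions canoes, yet its links still count as votes, which is the point of the approach: the ranking comes from what people chose to link to rather than from what a program can read.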

*

Q: That sounds like a really good idea. What took them so long?

A: For a lot of people, the first agenda item was to make something so that human beings could transfer all of their ideas to the Web. Once we have that unmanageable mass, the second phase is to address it with a computer program. The chaos of life is still the chaos of life, but for the first time--because it’s accessible through the Web--we can write a program to analyze it.

*

Q: Is there other technology on the horizon that could improve the Web?

A: We have a consortium project called HTTP-NG [the “NG” stands for Next Generation] that is looking at a complete soup-to-nuts redesign of HTTP [hypertext transfer protocol, the protocol browsers and servers use to exchange Web pages].

For example, we often have problems when somebody puts something on a Web server that’s very, very popular, because then the Web server becomes overloaded. What the system ought to be doing is transferring copies of that file all over the place. With HTTP-NG, the system will automatically start to make copies of your documents and move them out if it discovers that your document is very popular.
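The idea of copying a popular file outward can be pictured with a toy model. The sketch below is not HTTP-NG and makes no claim about how that project works; the class `ReplicatingStore`, the mirror names and the rule of adding one copy every `threshold` requests are all assumptions made up for illustration.

```python
from collections import Counter

class ReplicatingStore:
    """Toy model: a document gains one more copy each time its
    request count reaches another multiple of `threshold`."""

    def __init__(self, mirrors, threshold=3):
        self.available_mirrors = list(mirrors)  # hypothetical mirror names
        self.threshold = threshold
        self.hits = Counter()   # document -> number of requests seen
        self.copies = {}        # document -> servers holding a copy

    def request(self, doc):
        """Record one request and return the server chosen to answer it."""
        self.hits[doc] += 1
        holders = self.copies.setdefault(doc, ["origin"])
        # When the document proves popular, push a copy to one more mirror.
        if self.hits[doc] % self.threshold == 0:
            for mirror in self.available_mirrors:
                if mirror not in holders:
                    holders.append(mirror)
                    break
        # Spread requests across every server that holds a copy.
        return holders[self.hits[doc] % len(holders)]

store = ReplicatingStore(["mirror-1", "mirror-2"], threshold=2)
served_by = [store.request("popular.html") for _ in range(4)]
store.request("quiet.html")
```

After four requests the popular document lives on the origin server and both mirrors, while the page requested once stays only on the origin.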

*

Q: What else will HTTP-NG do?

A: Suppose you are putting a car up for sale. Instead of just putting up a Web page, you fill out a form that says, “This is for selling a motor vehicle in the state of California.” The moment you put that on the Web and the search engines index it, it can say, “Ah, this is an offer for sale,” and it will pass it off to a broker, who will look for requests for this item. You may have a couple of autonomous agents coming at you on behalf of human operators somewhere saying: “Hey, you want to sell a car? I’ve got some people who want to buy a car. Give me $10, and I’ll tell you where they are.”

*

Q: What other changes have you got in store?

A: Whereas phase 1 of the Web put all the accessible information into one huge book, if you like, in phase 2 we will turn all the accessible data into one huge database.

This will have a tremendous effect on e-commerce. You could say, “Find me a company selling a half-inch bulb to these specifications,” and a program will go through all the catalogs--which may be presented in very different formats--and figure out which fields are equivalent and then build a database and do a comparison very quickly. Then it will just go ahead and order it.
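A rough sketch of that comparison step follows. It assumes the hard part, working out which fields are equivalent, has already been done and written down by hand in `FIELD_ALIASES`; the vendors, field names and prices are invented for the example, and nothing here is drawn from a real catalog format.

```python
# Hand-written mapping from each vendor's field name to a shared name.
# In the scenario described above a program would work this out itself.
FIELD_ALIASES = {
    "size_inches": "diameter_in",
    "diameter_in": "diameter_in",
    "cost_usd": "price_usd",
    "price": "price_usd",
}

def normalize(record):
    """Rename a catalog record's fields to the shared names."""
    return {FIELD_ALIASES[k]: v for k, v in record.items() if k in FIELD_ALIASES}

def cheapest_match(catalogs, **spec):
    """Merge all catalogs into one table and return the cheapest row
    matching every requested specification, or None if nothing matches."""
    rows = [
        dict(normalize(record), vendor=vendor)
        for vendor, records in catalogs.items()
        for record in records
    ]
    matches = [r for r in rows if all(r.get(k) == v for k, v in spec.items())]
    return min(matches, key=lambda r: r["price_usd"], default=None)

# Hypothetical catalogs that describe the same kind of item differently.
catalogs = {
    "Acme": [
        {"size_inches": 0.5, "cost_usd": 1.20},
        {"size_inches": 1.0, "cost_usd": 0.90},
    ],
    "Bulbco": [{"diameter_in": 0.5, "price": 0.95}],
}
best = cheapest_match(catalogs, diameter_in=0.5)
```

Here `best` is the Bulbco half-inch bulb, even though the two vendors label size and price differently.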

It would be a real mistake for anyone to think the Internet is done or the World Wide Web is done. We’re just at the start of these technologies, and there’s a huge amount of research to be done. It’s very important for the government to keep funding it.

*

Times staff writer Karen Kaplan can be reached at karen.kaplan@latimes.com.