Autonomous car developers lobby to defang safety data regulations

The fast-rising autonomous vehicle industry is lobbying federal safety regulators to limit the amount of data companies must report every time their cars crash, arguing that the current requirements get in the way of innovation that will benefit the public.

The industry’s efforts to make driving safer and more accessible are at risk of being “drowned out by misinformation, inflation or dubious data without context” under reporting rules issued last summer by the National Highway Traffic Safety Administration, says Ariel Wolf, general counsel for the industry lobbying group Self-Driving Coalition for Safer Streets. Among its members: Alphabet-owned Waymo, Argo, Ford, General Motors, Cruise, Volvo, Aurora, Motional, Zoox, Uber and Lyft.

“We are desperate to share information,” Wolf told The Times. But that doesn’t include information the companies believe could be misinterpreted by the public, and certainly not information those companies deem proprietary.

Of special concern is the requirement that autonomous vehicle companies provide the agency with a monthly report listing every crash involving one of their vehicles in which the driverless function was engaged within 30 seconds of the crash. That puts an onerous burden on the companies to produce “a large number of insignificant reports,” according to a 13-page letter Wolf’s group wrote to Ann E. Carlson, NHTSA’s chief counsel. Sent Nov. 29, the letter laid out objections to major elements of the agency’s data order.

“The reporting process may generate flawed data while placing a heavy reporting burden on [automated vehicle] developers that unintentionally impedes deployment of safety-enhancing AV technology,” the coalition said in a separate media release.

Safety advocates are quick to dismiss such arguments. They see a familiar pattern of the automobile and technology industries pushing back on calls for more transparency that could help society weigh the benefits and the dangers of new technologies — including those that pilot multi-ton vehicles at high speeds on crowded roads.

Automated vehicles could indeed lead to consequential reductions in crashes, injuries and deaths. But more information, not less, is essential to track progress toward that goal and to prevent half-baked technology from threatening the public, the safety groups say.

“It is no secret that we believe there can be significant gains in vehicle safety for drivers, passengers and all vulnerable road users by standardizing and mandating existing advanced vehicle safety technology,” a coalition of six auto safety groups wrote in their own letter to NHTSA shortly after the data order was issued. However, the coalition said, “There should be no delay in data collection to help ensure these advances are working safely.”

The fight over autonomous vehicle crash data is a harbinger of fiercer debates to come as humans increasingly interact with robots and artificial intelligence technology increasingly pervades the culture, the economy and everyday life.

“We are starting to set important legal precedents as robotic technology enters into shared spaces, and I’m concerned that lobbying forces or human biases will unduly influence regulation,” said Kate Darling, a researcher at MIT’s Media Lab and author of “The New Breed: What Our History With Animals Reveals About Our Future With Robots.” “When robots and humans are interacting together, it’s important to step back and look at the whole system. Otherwise we risk favoring technology, and those who deploy it, to the detriment of the public good.”

The current crash data collection system was developed half a century ago and much of it remains paper-based, diffused across police departments nationwide. Beyond injury and death numbers, the detail the government can collect on crashes is scarce.

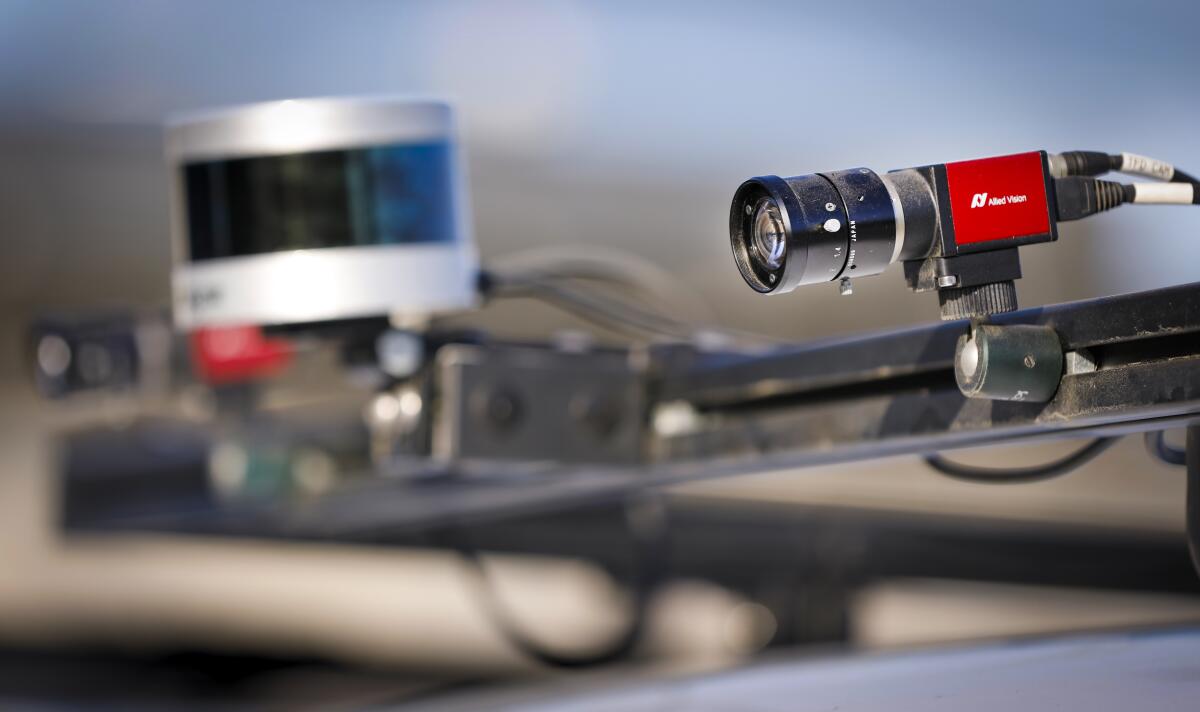

Meanwhile, car companies are logging huge volumes of information on automobile performance and the behaviors of drivers and passengers inside the cars, which now bristle with so much technology that some call them iPhones on wheels. And there are few restrictions on how they can use all those data. No laws preclude manufacturers and software providers from reselling driver and vehicle data to third parties, according to the Congressional Research Service.

Even minor crashes provide data that researchers and regulators will find useful as the technology develops, said Bryant Walker Smith, a specialist in autonomous vehicle law at the University of South Carolina.

“Companies should not be drawing this line,” he said. “Researchers need this data, how these vehicles are interacting with and coexisting in the world with human drivers and other road users.”

Under the NHTSA order, the maker of any vehicle equipped with driver-assist technology, such as Tesla’s Autopilot, and any company testing or deploying fully driverless robot cars must report every serious crash on the day it occurs. A death, an injury that requires hospital treatment, an air bag deployment or the need to have a battered car towed away all qualify a crash as serious.

The self-driving lobby wants more time to report such crashes and wants the towing trigger removed. In its letter, the group said that “it is often standard procedure” to have robot cars towed away from even minor crashes “where the vehicle is rear-ended at low speed.”

Whereas vehicles with driver-assist technology require reports only for serious crashes, companies testing or deploying driverless cars must also disclose any crash in a monthly report.

The Self-Driving Coalition wants that requirement scrapped, with the argument that data on minor crashes are of “limited value” to NHTSA. Wolf said the reporting requirements for driverless cars and driver-assist cars should be the same.

Low-speed rear-end collisions are one kind of accident Wolf’s group considers too minor to track. But human drivers ramming into the rear end of a robot car is the most common kind of autonomous vehicle crash, at least according to data collected by the California Department of Motor Vehicles. In 2021 through early December, out of 91 reported crashes, 50 were rear-enders.

Is that because robot cars are more hesitant to make turns into traffic than human drivers are, confusing the drivers behind them? Are rear-enders into robot cars more or less common than similar human-to-human crashes? Those are the kinds of questions researchers and policymakers need to be asking as more robot cars hit the roads, Smith said.

Data collection “should be broad and should not hinge on a company’s conclusion as to whether an incident rises to a certain level, or — worse — who is at fault,” Smith said.

The letter from the safety groups notes that rules on data collection in the past have been “heavily influenced by manufacturers objecting to reasonable data collection,” leaving safety regulators and researchers short of the knowledge needed to craft public policy.

As to the administrative burden the coalition says its members will suffer under the reporting requirements, NHTSA pegs the industry labor cost of compliance at about $1 million a year. The industry will take in about $109 billion in investment funding through 2025, according to consulting firm AlixPartners. Projected annual revenue 10 years out for the autonomous vehicle industry ranges into the hundreds of billions of dollars, and the financial benefits of controlling the personal data of “iPhones on wheels” have yet to be determined.
