Lip-syncing app TikTok will pay $5.7-million fine to settle child-privacy allegations

Social media app Musical.ly — now known as TikTok — agreed to pay a $5.7-million fine to settle federal allegations that it illegally collected the names, email addresses, pictures and locations of children younger than 13.

The fine is a record-high penalty for violations of the nation’s child privacy law. It’s the result of a settlement between the Federal Trade Commission and Los Angeles-based TikTok, which merged with Musical.ly in 2018, over allegations of illegal collection of children’s data.

The TikTok app, like Musical.ly before it, enables users to make videos of themselves lip-syncing to millions of songs, including from children’s movies, and is broadly popular among adults and children. TikTok is owned by Chinese company ByteDance.

The FTC said TikTok had 65 million registered users in the United States, and as of Wednesday, it was the fourth- and 25th-most popular free app on Google and Apple devices, respectively. The illegal data collection alleged by the FTC predates the TikTok-Musical.ly merger and, according to TikTok officials, is no longer occurring.

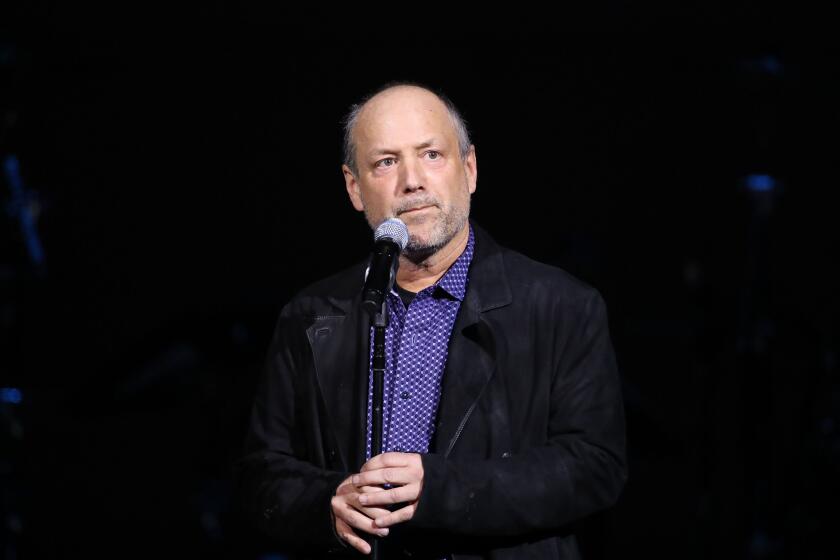

“The operators of Musical.ly knew many children were using the app but they still failed to seek parental consent before collecting names, email addresses, and other personal information from users under the age of 13,” FTC Chairman Joseph Simons said in a news release Wednesday. “This record penalty should be a reminder to all online services and websites that target children.”

The 1998 law, called the Children’s Online Privacy Protection Act, sharply limits the collection of personal data of online users younger than 13, but regulators in the past have not been sure how to apply the law to general-interest sites, as opposed to ones specifically directed at children. The law forbids online services from collecting data on children unless their parents give explicit permission, but it covers only services directed at children or ones that have “actual knowledge” that children are using them.

Musical.ly debuted in 2014 and operated out of offices in Shanghai and California. Including its successor TikTok, the app has been downloaded more than 200 million times. The original Musical.ly app required users to provide their names, email addresses and phone numbers and to post a profile picture. Until October 2016, the app also collected users' locations and allowed others to see which users were within a 50-mile radius of them, the FTC said.

The FTC noted in its filing that many TikTok users list their ages in short bios they post with their accounts, meaning that the app has “actual knowledge” that they are under 13. The FTC also reported receiving thousands of complaints from parents of young children using the app.

In response, TikTok said Wednesday that it would require new users to verify their ages and prompt existing users to verify how old they are. Users under 13 would be able to access a “limited, separate app experience” that meets U.S. restrictions on children’s privacy, the company said in a blog post. Children using the new app will be able to watch videos but won’t be able to share their own videos, make comments, maintain a profile or message other users.

“We are continuing to expand and evolve our protective measures,” TikTok said.

Although the FTC voted 5-0 to accept the settlement with TikTok that resulted in the $5.7-million fine, the commission's two Democrats said in a joint statement that the FTC should have held company executives personally accountable.

The Democratic commissioners, Rohit Chopra and Rebecca Slaughter, called the fine a “big win” to protect children online but also said in a statement, “As we continue to pursue violations of law, we should prioritize uncovering the role of corporate officers and directors and hold accountable everyone who broke the law.”

Privacy advocates said the FTC had not gone far enough to penalize TikTok, especially at a time when general-interest sites such as YouTube and games such as "Fortnite" are extremely popular among children.

“The FTC has given a gentle slap on the wrist, and it underscores how the agency has failed to enforce the only consumer privacy law it’s responsible for on children,” said Jeff Chester, the executive director of the Center for Digital Democracy. “TikTok and others have been failing to comply with [the 1998 children’s privacy law] for years, and the FTC has been reluctant to challenge the privacy-invasive practices of the companies that target children. This is too little, too late.”

A coalition of consumer and privacy groups filed a complaint with the FTC last year alleging that YouTube routinely violates the 1998 law. The commission — as is its standard practice when receiving complaints — has declined to comment on whether it is pursuing an investigation.

The TikTok case began with a referral from the Better Business Bureau’s Children’s Advertising Review Unit.

Timberg and Romm write for the Washington Post. The Associated Press was used in compiling this report.