Kicking racists off social media doesn’t threaten free speech. It protects it


For years, people have claimed that the taming of the internet was nigh. Whether cause for lamentation or celebration, the Wild West of content that stretched, unhampered by natural boundaries of river, mountain or sea, would be increasingly carved up and tied down by capitalism, privacy concerns and technological reality.

Soon the gold rush of freedom, of instant fame and fortune, would be over — co-opted by corporations, governmental regulation and way too many cosmetic lines.

But not until recently did we consider the basic metaphorical issue. In the last few months, indeed the last few days, the internet has been forced to acknowledge what that “Wild West” label really means: a mythology of individualism, iconoclasm and opportunity that almost always veils, and often encourages, racism, bigotry, chicanery and abuse.


For many who lived through it, there was nothing romantic about the “taming” of the American West, with its obliteration and “relocation” of Native Americans, its spread of white Christian authority, and its reliance on migrants, including Black Southerners, who fled oppression only to be met with similar bigotry. The Civil War was fought, in part, to keep Western states from becoming slave states; even so, the beauty and possibilities of the “new” land were regularly blighted by the same systems that marred the old.

And so it has been with the internet, as a flurry of corrective actions has shown.

In the last week alone:

- The Google-owned platform YouTube finally banned six white supremacist channels, including those belonging to former KKK leader David Duke and Richard Spencer, and demonetized longtime platform star Shane Dawson after he acknowledged that he had often used blackface, racist humor and inappropriate commentary.

- Reddit, which is owned by Condé Nast’s parent company, Advance Publications, banned thousands of communities that violated the company’s hate speech policies, including “The Donald,” a subreddit devoted to boosting support for President Trump through a panoply of racism, sexism, manipulated news and conspiracy theories.

- More than 500 companies, including Coca-Cola, Microsoft, Hershey, Adidas, Clorox and Ford, pulled their advertising from Facebook and Instagram as part of the Stop Hate for Profit initiative, demanding that the platforms tighten restrictions on misinformation and bigotry.

Individually, each action could be construed as an inevitable, and in some cases even conservative, response to the Black Lives Matter movement, which, after the police killing of George Floyd in Minneapolis, sent millions protesting across every state of the union and around the world.

Indeed, the most shocking part of the announcement that professional racists like Duke and Spencer were being kicked off YouTube was the revelation that they were still on YouTube — the platform had vowed to ban white supremacists and practitioners of hate speech last June.


But taken as a pattern, it seems the barons of the internet are finally acknowledging that they do not exist in an alternate universe, outside standard rules and regulations.

In many ways, Dawson’s censure may turn out to be the biggest news, with a much broader impact on the YouTube and influencer communities and their young audiences. As Dawson said in his apology, his racism was even more toxic because it was not couched in white supremacy but in humor and drama aimed at young people looking for an alternative to legacy media.

And he is not the only one being re-evaluated through the lens of current events. David Dobrik, Liza Koshy and other YouTubers have also recently apologized for racist humor or insensitivity.

Increasingly, problematic old posts are being resurfaced, with new or rekindled outrage. Controversy and “authenticity” drive the influencer marketplace, particularly on YouTube, where feuds end in reconciliations and mistakes in public apologies with dizzying regularity. Both are often naked bids for attention and more followers, which, as in all media, can translate into profit.

Dawson’s multimillion-dollar success was based, in part, on his ability to rebound from each controversy with “authenticity,” which often involved a long video or social media post from him about the “toxic” atmosphere that hangs over social platforms fueled by gossip (or “tea”), judgment and cancel culture. In fact, Dawson’s racist past was revisited as he tried to extricate himself from another drama. (This one involved YouTube beauty mavens Jeffree Star and James Charles and is so complicated, yet so banal, that I cannot bear to explain it, even if I were able to; Jezebel had a go, however.)

This time, an apology video proved to be the opposite of enough. A video in which Dawson mimed masturbating to a photo of then-11-year-old Willow Smith shocked many and prompted angry reactions from her mother, Jada Pinkett Smith, and her brother, Jaden Smith.

Dawson’s use of blackface and racist humor also was, by his own admission, too frequent to be considered “a mistake,” especially to a world grown weary of similar apologies.

Social media has, for many, become a replacement for traditional media, news and entertainment, in part because platforms like Twitter were seen as democratic: accessible to all and gatekept by none, they were the ultimate expression of free speech.

Indeed, the protests over George Floyd’s death might not have expanded so widely if not for social media, which allowed the video of his terrible final minutes to circulate to millions. Social media has become a very effective tool of social policing, especially of the police. It’s our smartphones that give citizens the capability of documenting everything from Costco Karen to police firing tear gas at clearly peaceful protesters. It’s social media that allows those videos to go viral.

But as the Mueller report revealed, the lack of even basic gate-keeping can backfire and cause serious personal and societal damage. Whatever you believe about the president’s involvement, there is incontrovertible evidence that Russian operatives used Facebook and Twitter in an attempt to manipulate the 2016 election.

Last month, Twitter, bowing to pressure over the spread of misinformation and hate speech, began adding labels to tweets it considered inaccurate or particularly incendiary, including several issued by the president. In response, many Trump supporters are turning to a new platform, Parler, which has no such labels.

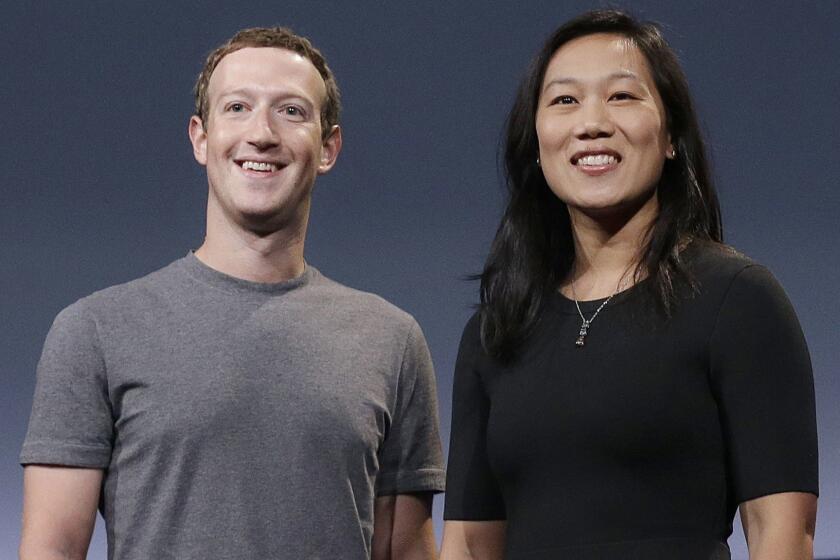

Facebook has refused to introduce similar labels, though it does have a 27-page “community standards” guideline that prohibits hate speech, incitement to violence and the spread of misinformation (although staff members recently protested CEO Mark Zuckerberg’s seeming refusal to apply these rules to President Trump).


In response to the advertising boycott, the site says many accounts breaking that policy have already been shut down. Zuckerberg has said he will meet with Stop Hate for Profit leaders, but that his opposition to tightening restrictions remains: The advertisers will be back, he has said, and the site will not change, because it is not Facebook’s business to fact-check or police its patrons.

It is true that under Section 230 of the Communications Decency Act, made law in 1996, social media platforms generally cannot be held liable for statements their users make on the platform, or for any subsequent actions taken because of those statements. But the law also allows those platforms to institute their own rules.

Social media platforms are all businesses, owned and operated by people who often make quite a bit of money doing so. “Freedom” is something these companies sell; free speech is a guaranteed constitutional right, but Facebook, like Costco, is private property — you can be told to take your speech elsewhere at any time.

And slowly, finally, that is what is beginning to happen. Kicking a few people off YouTube is not going to end racism any more than toppling a few statues will. But re-examining this country’s mythologies around the Founding Fathers, the Confederacy or the “settling of the West” just might, and applying universal social standards to the social media posts and videos that make many people a great deal of money certainly will.

Free speech only works if everyone is actually free.
