Tesla’s Smart Summon is a potential self-driving nightmare, and regulators are ignoring the risk


Here we go again. In the rush to roll out driverless cars, Tesla is playing fast and loose with public safety by putting untested, uncontrolled autonomous vehicles on city streets.

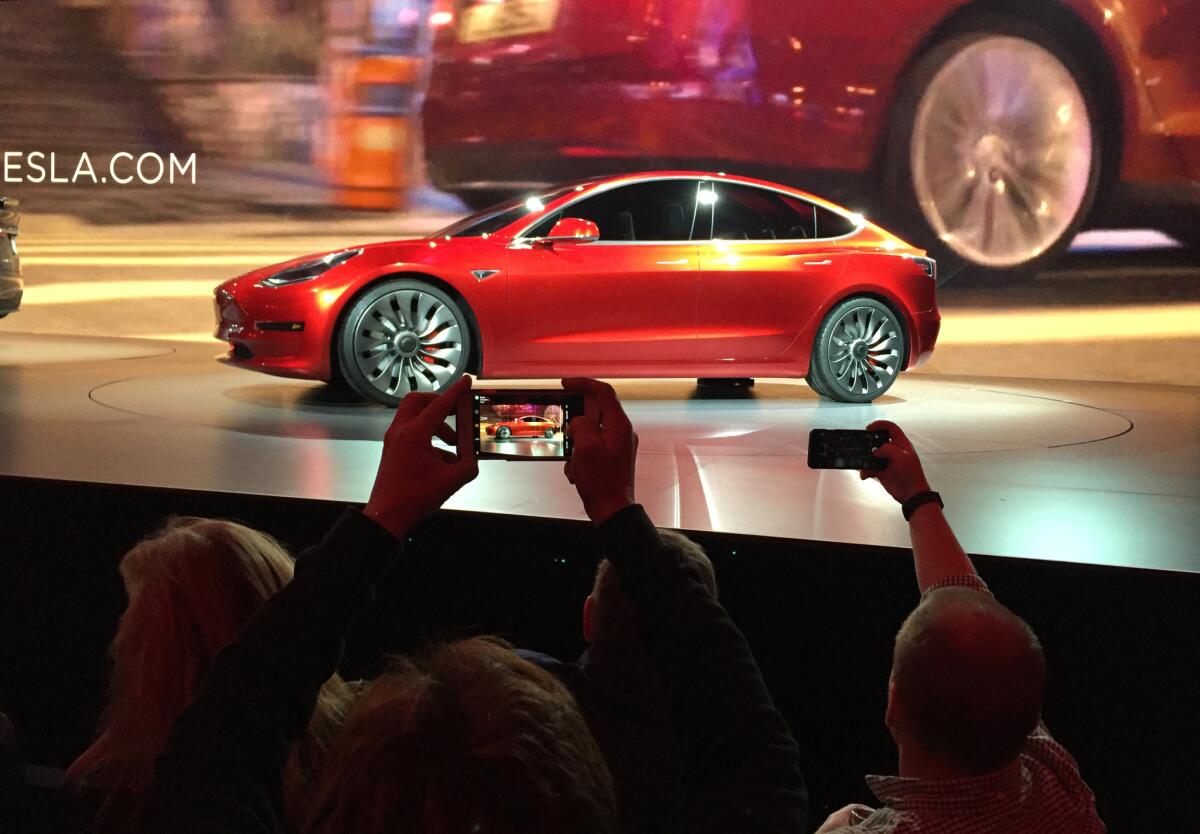

Last month the car company released Smart Summon, a software update that allows Tesla owners who have purchased a “full self-driving” package to use a smartphone app to command their vehicle to turn itself on, pull out of a parking space and drive to the smartphone holder’s location. The app works on Teslas parked up to 200 feet away.

Tesla beamed Smart Summon to customers with instructions to use it only in private parking lots and driveways, and only if the app user can see the car at all times “because it may not detect all obstacles.” Oh, and yes, “be especially careful around quick moving people, bicycles and cars.” You think?

Of course, videos soon appeared showing driverless Teslas cruising through busy shopping center parking lots, driving on public roads and traveling out of sight of their operator, Times reporter Russ Mitchell found. Some videos show successful trips by the unmanned vehicles, while others show near collisions and snafus with the system.

Apparently, it’s all perfectly legal. And that’s the problem.

The federal government’s transportation safety regulator, the National Highway Traffic Safety Administration, says it is keeping an eye on the Smart Summon system and “will not hesitate to act” if it finds evidence of a safety-related defect. That’s in keeping with the hands-off approach to autonomous vehicles taken by the federal government, which has adopted no national rules governing the deployment and performance of driverless technology.

There are rules for driverless cars at the state level, but they vary from state to state. California, which has been a leader in trying to sort through the complexities of the coming driverless revolution, developed some of the first regulations to guide the testing and deployment of truly autonomous cars on public streets.

Only one company — Waymo, the self-driving division of Google parent company Alphabet — has a permit from California to test autonomous cars without a human inside. Tesla and 62 other companies have permission to test autonomous cars with a person on board.

That’s why it’s so surprising and disappointing that the California Department of Motor Vehicles is going along with this self-serving experiment in self-driving in the public space.

The DMV made the mystifying decision that a car driving itself through a parking lot is, in fact, not “autonomous technology” subject to state regulation. A DMV spokesman compared Smart Summon to other advanced driver-assist features on the market. And he told The Times that because Smart Summon is controlled by a human wielding a smartphone, it doesn’t count as autonomous under state regulations.

That reasoning opens a can of worms. What happens if or when Tesla develops Smart Summon to operate over a range longer than 200 feet? The company has already promised to add automatic driving on city streets by the end of the year, along with traffic-light and stop-sign recognition. If people can summon their Teslas from across town with their smartphone, is the technology still off-limits for regulation by California?

Plus, there is a big difference between driver-assist features such as adaptive cruise control and blind spot monitoring, which are designed to help the driver safely manage the car, and Smart Summon, in which the driver cedes control to the computer after turning the system on.

And who will ensure that Tesla owners are using this new technology safely? Tesla has been far more aggressive than its rivals in making cutting-edge driverless technology readily available to its customers. The company has already come under scrutiny by regulators for its Autopilot system after two fatal crashes involving Tesla’s driver assistance system.

The bigger problem is that federal regulators have refused to adopt rules for the testing and deployment of driverless cars. Current policies let the car’s manufacturer decide when the vehicle is safe enough for public use. But the public streets should not be turned into laboratories for private company experiments, especially not when the results can be lethal.
